Introduction

Many things seem obvious once done, or fall into the category of "Oh, I will remember that," but in reality they need to be written down. It is much simpler to open a page of instructions than it is to try to remember how everything went together.

The organization is pretty much outside in. It starts with the network gateways and outside services and then moves in to the servers that are either standalone or act as the host to various virtual machines, VMs, that provide services. The services are intended to be used by family and friends, but are largely a labor of love. After 45 years going to work and mastering technology, it was not possible to just walk away. I needed a hobby and the fact that it looks a lot like what was originally the job is not really an accident.

The hardware, base level software and network setup/configuration information is here. This does not include applications.

Applications make the web, and the world, go 'round. It is no different here. Collected here is information about the applications that are installed as well as the content environments that make them available.

Raspberry Pi systems are becoming more of a thing with me. Current projects include the ADS-B receivers and Sky Camera.

Automatic Dependent Surveillance-Broadcast (ADS-B) is a surveillance technology in which an aircraft determines its position via satellite navigation and periodically broadcasts it, enabling it to be tracked. I have set up a local receiver that feeds several services. I started with Flightradar24 but have expanded to include others. In exchange for feeding the aggregation sites I receive complimentary subscriptions to their services.

In July 2023, I adopted the new and improved services of ultrafeeder.

Ok, I am a digital pack rat. I have files that I will probably never use, view or listen to, but I find it hard to get rid of them. At least I got over it with real books and stuff.

The files come from several places and then, ideally, go to their respective locations. Most require a little work to make them useable, which can be read as "findable".

The primary classifications are:

This includes books, documents, manuals, contents of files from old jobs, bills, letters, etc. Pretty much anything that takes up space but that I don't want to store. As a pack rat, I am uncomfortable throwing something away and thus destroying it permanently. Scanning and categorizing them digitally lets me do that even if I never plan to revisit the item again.

Infrastructure

I am just redoing the Linux box and recasting it as Proxmox with a docker swarm. This is partly so that I have a place to experiment without tearing up the running "production" media server.

I found a fellow geek who calls himself the funkypenguin on Github. He has created a whole collection of docker recipes to create just about any reasonable application. As is often the case with tools like this, picking it up assumes information that is not necessarily obvious to everyone. This picks up at the beginning, where I started. GeekCookbook

Mediawiki

This is a great tool for organizing information. It also becomes a bit of a mess. Occasionally it is necessary to update the tool. The standard update cadence is a release every year, and an LTS, or Long Term Support, release goes back two releases. I am currently at release 1.44.0 as of August 2025.

The process is well described in the Mediawiki wiki. Some things to keep in mind. Over time, the LocalSettings.php file grows and changes. There are a few changes that are obviously required like the name of the wiki and DB info, but other changes are less obvious as are the reasons that they were changed or included originally. Getting the extensions up to date is a bit of a chore.

There is one utility that is required for some of the extensions to work and that is composer. A full discussion of it is here:

Installing Mediawiki Extensions with Composer

A couple of problems appeared once I brought up the new release. The Maps extension stopped working. The AbuseFilter extension would crash when the Special Pages page was opened.

The AbuseFilter fix is to add the following line to the "require" section in the composer.json file:

"wikimedia/equivset": "^1.0.0",

and running the following:

composer update

This will force the appropriate library to be loaded.

Setting up the Hardware

The primary system is an Intel NUC 11 Extreme system.

Proxmox

Install Proxmox

Install UPS Monitor

The APC UPS connects to the server with a USB connection. Proxmox will do a pass-thru of the connection to a VM.

Plug the provided USB cable into the port on the UPS and the other end into a port on the server. Log in to the Proxmox console and run:

lsusb

The output will look like this.

root@proxmox:~# lsusb

Bus 004 Device 001: ID 1d6b:0003 Linux Foundation 3.0 root hub

Bus 003 Device 003: ID 152e:2571 LG (HLDS) GP08NU6W DVD-RW

Bus 003 Device 010: ID 051d:0002 American Power Conversion Uninterruptible Power Supply

Bus 003 Device 009: ID 046d:c52b Logitech, Inc. Unifying Receiver

Bus 003 Device 002: ID 1a40:0801 Terminus Technology Inc. USB 2.0 Hub

Bus 003 Device 005: ID 067b:2323 Prolific Technology, Inc. USB-Serial Controller

Bus 003 Device 004: ID 8087:0032 Intel Corp.

Bus 003 Device 001: ID 1d6b:0002 Linux Foundation 2.0 root hub

Bus 002 Device 001: ID 1d6b:0003 Linux Foundation 3.0 root hub

Bus 001 Device 001: ID 1d6b:0002 Linux Foundation 2.0 root hub

Bring up the Proxmox dashboard and select the VM that is planned to run the monitoring software. Click on Hardware and the Add button. Select Use USB Vendor/Device ID and then the "American Power" line and then click on Add.
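The same vendor/device ID can be pulled out of the lsusb listing on the command line. This is a minimal sketch, not part of the original procedure: the helper name find_usb_id is made up for illustration, and the VM ID 100 in the usage comment is a placeholder. The qm set form shown in the comment is the Proxmox CLI way to attach a USB device by ID, but check it against your Proxmox version before relying on it.

```shell
# Print the vendor:product ID (field 6 of an lsusb line) for the first
# line that contains the given text. Reads lsusb output on stdin.
find_usb_id() {
    awk -v pat="$1" 'index($0, pat) { print $6; exit }'
}

# Hypothetical usage on the Proxmox host (VM ID 100 is a placeholder):
#   usb_id=$(lsusb | find_usb_id "American Power Conversion")
#   qm set 100 -usb0 "host=${usb_id}"
```

Matching on the device description rather than the bus and device numbers keeps the lookup stable when the cable is moved to a different port.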

This link has a promising tool, if I can get it to work. https://github.com/Brandawg93/PeaNUT

See also: https://www.reddit.com/r/selfhosted/comments/19dt58s/update_peanut_a_tiny_dashboard_for_network_ups/

The installation process for the Ubuntu virtual machines is pretty straightforward. The idea is to get a vanilla basic installation that can be built from. This should be templated.

Rufus

Rufus was the Sun workstation that was on my desk at Informix for a number of years. Later, when I took my own Sun system into RTI (big mistake but that is not relevant to this), it was also named rufus.

In the home server context, rufus is a bare bones Ubuntu server that runs some basic services. The following services are installed:

- Webmin
- DNS/Bind
- Minimal LAMP

Install Webmin

First install dependencies and then Webmin itself. Note that the version is baked into the command.

cd /tmp

sudo apt update

sudo apt install perl libnet-ssleay-perl openssl libauthen-pam-perl libpam-runtime libio-pty-perl apt-show-versions python3 unzip nodejs npm

The Webmin repository has not changed since 2011 so it is unlikely to soon.

cat >>/etc/apt/sources.list <<EOF

deb http://download.webmin.com/download/repository sarge contrib

EOF

wget -q -O- http://www.webmin.com/jcameron-key.asc | apt-key add -

apt update

apt install webmin

Digital Ocean has the magic to install a certificate. https://www.digitalocean.com/community/tutorials/how-to-install-webmin-on-ubuntu-20-04

Make Rufus a DNS Server

Install Unbound

apt install unbound </dev/null

wget https://www.internic.net/domain/named.root -qO- | sudo tee /var/lib/unbound/root.hints

Configure Unbound

Highlights:

- Listen only for queries from the local Pi-hole installation (on port 5335)
- Listen for both UDP and TCP requests
- Verify DNSSEC signatures, discarding BOGUS domains
- Apply a few security and privacy tricks

vi /etc/unbound/unbound.conf.d/pi-hole.conf

server:

# If no logfile is specified, syslog is used

# logfile: "/var/log/unbound/unbound.log"

verbosity: 0

interface: 127.0.0.1

port: 5335

do-ip4: yes

do-udp: yes

do-tcp: yes

# May be set to yes if you have IPv6 connectivity

do-ip6: no

# You want to leave this to no unless you have *native* IPv6. With 6to4 and

# Teredo tunnels your web browser should favor IPv4 for the same reasons

prefer-ip6: no

# Use this only when you downloaded the list of primary root servers!

# If you use the default dns-root-data package, unbound will find it automatically

#root-hints: "/var/lib/unbound/root.hints"

# Trust glue only if it is within the server's authority

harden-glue: yes

# Require DNSSEC data for trust-anchored zones, if such data is absent, the zone becomes BOGUS

harden-dnssec-stripped: yes

# Don't use Capitalization randomization as it is known to cause DNSSEC issues sometimes

# see https://discourse.pi-hole.net/t/unbound-stubby-or-dnscrypt-proxy/9378 for further details

use-caps-for-id: no

# Reduce EDNS reassembly buffer size.

# IP fragmentation is unreliable on the Internet today, and can cause

# transmission failures when large DNS messages are sent via UDP. Even

# when fragmentation does work, it may not be secure; it is theoretically

# possible to spoof parts of a fragmented DNS message, without easy

# detection at the receiving end. Recently, there was an excellent study

# >>> Defragmenting DNS - Determining the optimal maximum UDP response size for DNS <<<

# by Axel Koolhaas, and Tjeerd Slokker (https://indico.dns-oarc.net/event/36/contributions/776/)

# in collaboration with NLnet Labs explored DNS using real world data from the

# RIPE Atlas probes and the researchers suggested different values for

# IPv4 and IPv6 and in different scenarios. They advise that servers should

# be configured to limit DNS messages sent over UDP to a size that will not

# trigger fragmentation on typical network links. DNS servers can switch

# from UDP to TCP when a DNS response is too big to fit in this limited

# buffer size. This value has also been suggested in DNS Flag Day 2020.

edns-buffer-size: 1232

# Perform prefetching of close to expired message cache entries

# This only applies to domains that have been frequently queried

prefetch: yes

# One thread should be sufficient, can be increased on beefy machines. In reality for most users running on small networks or on a single machine, it should be unnecessary to seek performance enhancement by increasing num-threads above 1.

num-threads: 1

# Ensure kernel buffer is large enough to not lose messages in traffic spikes

so-rcvbuf: 1m

# Ensure privacy of local IP ranges

private-address: 192.168.0.0/16

private-address: 169.254.0.0/16

private-address: 172.16.0.0/12

private-address: 10.0.0.0/8

private-address: fd00::/8

private-address: fe80::/10

Start your local recursive server and test that it's operational:

service unbound restart

dig pi-hole.net @127.0.0.1 -p 5335

The first query may be quite slow, but subsequent queries, also to other domains under the same TLD, should be fairly quick.
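To go beyond eyeballing the dig output, the response status can be extracted and compared. This is a small sketch: the helper name dig_status is invented here, and the two test domains in the usage comment are the ones I recall from the Pi-hole Unbound guide, so treat them as an assumption and substitute any known-good and known-broken DNSSEC domains.

```shell
# Extract the response status (NOERROR, SERVFAIL, ...) from dig output on stdin.
dig_status() {
    sed -n 's/.*status: \([A-Z]*\),.*/\1/p' | head -n 1
}

# Hypothetical usage against the local Unbound instance:
#   dig fail01.dnssec.works @127.0.0.1 -p 5335 | dig_status   # should report SERVFAIL
#   dig dnssec.works @127.0.0.1 -p 5335 | dig_status          # should report NOERROR
```

A SERVFAIL on the deliberately broken domain together with NOERROR on the good one suggests that DNSSEC validation is actually being enforced.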

You should also consider adding the following to signal FTL to adhere to this limit. Since PiHole is not installed yet, the mkdir is needed.

mkdir /etc/dnsmasq.d

cat > /etc/dnsmasq.d/99-edns.conf<<EOF

edns-packet-max=1232

EOF

Install PiHole

curl -sSL https://install.pi-hole.net | bash

Need to do a little work on what to respond on the screens. Most are default.

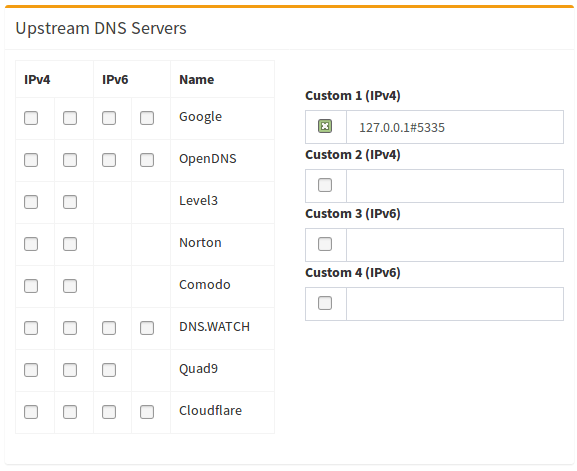

Use "Custom" for the upstream and define it as 127.0.0.1:5335 per the Unbound instructions.

Edit the /etc/lighttpd/lighttpd.conf file to change the port from 80 to 1010. This is to make way for a webserver.

service lighttpd restart
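The port edit can also be scripted instead of done by hand. This is a sketch under two assumptions: the config uses a single "server.port = N" line, and sed is GNU sed (as on Ubuntu), since the -i flag behaves differently elsewhere. The function name set_lighttpd_port is made up for illustration. Restart lighttpd afterwards as shown above.

```shell
# Rewrite the "server.port = N" line in a lighttpd config file in place.
# Arguments: path to the config file, new port number.
set_lighttpd_port() {
    conf="$1"
    port="$2"
    sed -i "s/^\(server\.port[[:space:]]*=[[:space:]]*\)[0-9][0-9]*/\1${port}/" "$conf"
}

# Hypothetical usage:
#   set_lighttpd_port /etc/lighttpd/lighttpd.conf 1010
```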

Finally replace the symlink with a file pointing to ourself as the nameserver.

rm /etc/resolv.conf

cat > /etc/resolv.conf <<EOF

nameserver 127.0.0.1

EOF

Configure PiHole

Set the pihole admin password:

pihole -a -p

Finally, configure Pi-hole to use your recursive DNS server by specifying 127.0.0.1#5335 as the Custom DNS (IPv4):

After installation, the DNS server information is:

You may now configure your devices to use the Pi-hole as their DNS server

[i] Pi-hole DNS (IPv4): 192.168.86.2

[i] Pi-hole DNS (IPv6): 2601:200:4400:a2:8ab0:dae6:da8f:570e

[i] If you have not done so already, the above IP should be set to static.

The IPv6 address is currently not set on the rufus interfaces.

After installation, change the port in /etc/lighttpd/lighttpd.conf from 80 to 1010

The pi.hole password is KPYoWgGC

Install Caddy

This procedure aims to simplify running custom caddy binaries while keeping support files from the caddy package.

This procedure allows users to take advantage of the default configuration, systemd service files and bash-completion from the official package.

Requirements:

Install caddy package according to these instructions

Install caddy package

apt install -y debian-keyring debian-archive-keyring apt-transport-https curl < /dev/null

curl -1sLf 'https://dl.cloudsmith.io/public/caddy/stable/gpg.key' | gpg --dearmor -o /usr/share/keyrings/caddy-stable-archive-keyring.gpg

curl -1sLf 'https://dl.cloudsmith.io/public/caddy/stable/debian.deb.txt' | tee /etc/apt/sources.list.d/caddy-stable.list

apt update

apt install caddy </dev/null

The second part is to configure, download and install the appropriate binary with the Cloudflare DNS module included. Since there are numerous modules that can be included and most are not mutually exclusive, Caddy provides a download page that allows the selection of modules to include and then builds the appropriate binary, which is then downloaded and installed.

On the Proxmox console for the appropriate VM, open the Firefox browser to: https://caddyserver.com/download

Select the appropriate architecture. The normal here is Linux amd64 but arm64 would be appropriate for a Raspberry Pi installation. The only module that is currently added is caddy-dns/cloudflare. Click to select and then click on Download. It will be placed in the Downloads folder of the underlying user.

cd /home/${SUDO_USER}/Downloads

dpkg-divert --divert /usr/bin/caddy.default --rename /usr/bin/caddy

mv ./caddy_linux_amd64_custom /usr/bin/caddy.custom

chown root:root /usr/bin/caddy.custom

chmod 755 /usr/bin/caddy.custom

update-alternatives --install /usr/bin/caddy caddy /usr/bin/caddy.default 10

update-alternatives --install /usr/bin/caddy caddy /usr/bin/caddy.custom 50

systemctl restart caddy

dpkg-divert will move /usr/bin/caddy binary to /usr/bin/caddy.default and put a diversion in place in case any package wants to install a file to this location.

update-alternatives will create a symlink from the desired caddy binary to /usr/bin/caddy

systemctl restart caddy will shut down the default version of the Caddy server and start the custom one.

You can change between the custom and default caddy binaries by executing:

update-alternatives --config caddy

and following the on screen information, then restarting the Caddy service.
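One way to confirm which binary is active is to look for the Cloudflare DNS provider in the module list. This is a sketch: the helper name has_cloudflare_dns is invented for illustration, and it assumes the module registers under the ID dns.providers.cloudflare, which is how the caddy-dns/cloudflare module is listed as far as I know.

```shell
# Succeed if the module list on stdin includes the Cloudflare DNS provider.
has_cloudflare_dns() {
    grep -q 'dns\.providers\.cloudflare'
}

# Hypothetical usage after switching binaries:
#   caddy list-modules | has_cloudflare_dns && echo "custom build active"
```

If the check fails after a package upgrade, rerunning update-alternatives --config caddy is the first thing to try.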

This information was taken and adapted from: https://caddyserver.com/docs/build. The procedure here skips the build in favor of configuring and downloading the custom package.

Install Apache

The 'A' in the LAMP stack needs to be installed since we have the 'L' covered. Then add the php for 'P'.

apt install apache2 < /dev/null

a2enmod rewrite

systemctl restart apache2

systemctl status apache2

apt-get install php php-apcu php-intl php-mbstring php-xml php-mysql php-calendar mariadb-server apache2 -y < /dev/null

Test by connecting to the system with a web browser. Test php with the following:

php --version

Add MariaDB to complete the install

apt install mariadb-server mariadb-client < /dev/null

systemctl status mariadb

mysql_secure_installation

Now, allow root to login to the phpmyadmin console:

mysql -u root

[mysql] use mysql;

[mysql] update user set plugin='' where User='root';

[mysql] flush privileges;

[mysql] \q

Install Phpmyadmin

sudo apt install phpmyadmin php-mbstring

The install script will offer a choice of web servers but none will be selected. Choose apache2. Follow this with a little tweak and restart apache.

sudo phpenmod mbstring

sudo systemctl restart apache2

Chico1

Chico1 is a VM running on Proxmox.

Creating the VM

Open the Proxmox dashboard at http://proxmox:8006.

If there is a previous version you will probably stop it and recreate it.

The current configuration is: CPU type Host, 4 processors, 8GB RAM, 500GB disk.

Set the network interface MAC address to: d2:ef:18:60:99:5e. Alternatively, take down and replace the port mapping of the router to get the new MAC Address.

Pull the OS from the local repository. Currently Ubuntu 22.04.

Install and Configure Docker Environment (Replaced with Auto-Traefik)

The below instructions to install docker have been replaced by using the Auto-Traefik utility. Auto-Traefik

Use the following to install the latest docker and docker-compose:

sudo apt update

sudo apt -y install apt-transport-https ca-certificates curl software-properties-common gnupg lsb-release

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /usr/share/keyrings/docker-archive-keyring.gpg

echo "deb [arch=$(dpkg --print-architecture) signed-by=/usr/share/keyrings/docker-archive-keyring.gpg] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

sudo apt update

sudo apt -y install docker-ce

Check here: Docker-compose Releases and adjust the command accordingly.

sudo curl -L https://github.com/docker/compose/releases/download/v2.39.2/docker-compose-`uname -s`-`uname -m` -o /usr/local/bin/docker-compose

sudo chmod +x /usr/local/bin/docker-compose
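Since the version is baked into the download command, it can help to build the URL from a variable. This is a sketch, with the helper name compose_url made up for illustration. It lowercases the kernel name because, as far as I can tell, recent Compose v2 release assets use names like docker-compose-linux-x86_64; asset naming has varied between releases, so confirm against the releases page before using it.

```shell
# Build the download URL for a Compose release binary.
# Arguments: version tag (e.g. v2.39.2), kernel name, machine architecture.
compose_url() {
    version="$1"
    os=$(printf '%s' "$2" | tr '[:upper:]' '[:lower:]')
    arch="$3"
    printf 'https://github.com/docker/compose/releases/download/%s/docker-compose-%s-%s\n' \
        "$version" "$os" "$arch"
}

# Hypothetical usage:
#   sudo curl -L "$(compose_url v2.39.2 "$(uname -s)" "$(uname -m)")" -o /usr/local/bin/docker-compose
```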

Install the Linux ACL package

sudo apt -y install acl

Create and set permissions for the top level docker folder.

sudo groupdel docker

sudo useradd -d /home/docker -m docker -u 1000 -U -c Docker -G "adm,sudo"

sudo mkdir ~docker

sudo chmod 775 ~docker

sudo setfacl -Rdm g:docker:rwx ~docker

sudo setfacl -Rm g:docker:rwx ~docker

The above commands provide access to the contents of the docker root folder (both existing and new stuff) to the docker group. This is a pretty liberal set of permissions but this is not a production server.

Install additional support tools

The ctop utility provides a top like display for docker containers.

Check here for latest: Github: ctop.

sudo wget https://github.com/bcicen/ctop/releases/download/v0.7.7/ctop-0.7.7-linux-amd64 -O /usr/local/bin/ctop

sudo chmod +x /usr/local/bin/ctop

RDP-SSH over Cloudflare tunnel

https://github.com/blibdoolpoolp/Cloudflared-RDP-Tutorial-Free/blob/main/config.yml

YAMS

Proxmox Helper Scripts

https://tteck.github.io/Proxmox/

Books and Magazines

Books and magazines are catalogued in the Calibre application. Most come in from the internet in one way or the other.

Books

Incoming books are best processed in batches. One of the distributors tends to send them out in groups of 40. This works pretty well.

Open the Calibre app and load the Calibre-tech-work library. This will hopefully be empty. Open the folder with the collection to be processed. Select all and drop them into the work pane of Calibre.

Select all of the titles and process them with the "Extract ISBN" tool. Apply the changes when it returns. Then do a Download Metadata and Covers on them. Go get coffee, that takes quite a while. When it returns, apply the updates.

Check the results. Ones that are clearly complete can be moved to the permanent libraries. There will be some titles that didn't process because the title/author could not be found. For many this can be edited and the metadata can be downloaded for each title.

Missing covers can generally be extracted. For PDF files, open with the Foxit PDF reader, click on Home->Select and then click on the cover page. Go to Calibre and paste the selection into the cover. For E-pubs, open the title and use the Snagit app to get the image. Snagit will open with the selection. Click on copy all to clipboard and then go over to Calibre and paste it into the cover.

Titles go to the following libraries by type:

| Genre | Library |

|---|---|

| General fiction | Calibre-Fiction |

| General Non-fiction | Calibre-library-NF |

| Technical non-fiction | Calibre-library-NF-Tech |

| Cookbooks | Calibre-Cookbooks |

Magazines

Magazines are handled much like books, but they generally need more work with less automation.

After importing the titles, edit the fields as follows:

| Field | Format |

|---|---|

| Title | Magazine Name - yyyy/mm/dd |

| Author | Publisher |

| Series | Magazine Name |

| Number | Issue Number or yyyy.mm |

| Publisher | Publisher |
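The title format in the table can be produced consistently with a small helper. This is only a sketch: the function name magazine_title and the magazine name in the usage comment are made up, and the numeric arguments are assumed to be plain integers without leading zeros.

```shell
# Format a Calibre title as "Magazine Name - yyyy/mm/dd".
# Arguments: magazine name, year, month, day (plain integers, no leading zeros).
magazine_title() {
    printf '%s - %04d/%02d/%02d\n' "$1" "$2" "$3" "$4"
}

# Hypothetical usage:
#   magazine_title "Example Monthly" 2024 3 1
```

Zero-padding the month and day keeps the titles sorting in date order within a series.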

Magazine name format:

For quarterlies:

12 1 2 3 4 5 6 7 8 9 10 11

Movies

These are probably the easiest and quickest to handle. They come from the web, don't ask where, or are ripped from DVDs that we used to buy. They end up in the Plex movie library. The processing involves naming them according to the rules and downloading subtitles when appropriate. The main tool for this is FileBot. While it can be viewed as redundant, movies on the streaming services tend to come and go. Their catalogs also tend to lean toward recent releases and ones that are popular. An odd film that was interesting as a kid is more likely to not be available.

TV Shows

Software

Software comes in from several places. It exists in several states.

Licensed

This includes software that was explicitly purchased or came attached to purchased hardware.

Open Source

This includes most everything related to Linux and its installations.

Other

Catch all of other software with mixed licensing.

Installation

Not much to say here. Software gets installed and removed in the general course of the computer experience.

Locations

On the Road to Elsewhere

Streaming Services

These are to enhance our viewing pleasure. Hard to believe that when we got our first TV in the 1950s, there were two national networks and some local stations. If you wanted to watch TV at 3AM, you were totally out of luck.

In general, the services can be reached on computers via the included link. The TVs, TiVos and phones and pads have apps. The account information is kept separately, just ask.

Apple TV

Discovery+

We have the ad free version. Go to Discovery+ and sign in, or use the Discovery+ app on TVs and phones. Discovery+ and HBOMax have announced that they will be merging now that they are both owned by Warner.

Disney

HBOMax

HBOMax is made available through AT&T Wireless phone subscriptions. It can be accessed from phone, TV or computer. On a computer, go to HBO Max.

Hulu

Plex

Plex operates as both a local server on the Qnap box and as a streaming service from plex.tv.

I have a Lifetime Plex Pass account for the streaming service on lynnmacey@gmail.com.

The Plex server application running on the Qnap is retrieved from the plex.tv site and installed. See updates.